Accidentally Rebuilding HR (Without the H)

The agent era is really a governance era. Most organizations aren't ready for that sentence.

A couple of days ago I was getting my usual at Philz (Jacob’s Wonderbar, for those curious) on a cloudy San Francisco morning, and taped to the lamppost right outside was a poster for something called MCP Night, with “Agent Mode” in small text along the bottom.

We used to see comedy night posters on that lamppost. Now it’s MCP Night. I don’t know, I found that funny. Not funny ha-ha, more funny in the way that signals something real is shifting when you weren’t quite looking.

I’ve been thinking about what exactly is shifting.

A year ago the conversation was copilots. AI riding shotgun, helping you go faster, you still holding the wheel. The mental model was fundamentally one of assistance, a very smart autocomplete that had learned to reason, handed to a human who remained the principal actor in any meaningful sense. Organizations bought it on that premise. Vendors sold it on that premise. The AI helped. You decided.

Somewhere in the last six months that frame quietly broke, and nobody quite announced when it happened. The companies building at the frontier aren’t optimizing the assistant anymore. They’re building onboarding flows and permission architectures and memory systems and escalation paths, making decisions that have historically belonged to managers and HR departments: how much autonomy does a new team member get before they’ve earned trust, what happens when they make a consequential mistake, who do they report to, how does the organization know what they’re doing when nobody’s watching.

We moved from copilot to coworker without pausing to notice the transition, which is either impressive or alarming depending on how you look at it.

Aaron Levie, who has been thinking about enterprise software longer and more clearly than almost anyone, put the implementation reality plainly recently. When you move from a chat paradigm to agents that participate in meaningful workflows, enterprises face a genuine cascade of hard problems. You have to modernize legacy infrastructure so agents can reach the data that actually contains institutional context, decades of it in many cases, sitting in systems that were never built with non-human actors in mind. You need access controls and entitlements scoped correctly for those actors. You need processes documented in a form agents can actually use, which is different from the form humans use, and the human form is often not documented at all. You need to redesign workflows so humans and agents divide the labor in a way that compounds rather than just replicates the old arrangement. You need evals for the new end states. And then you need to keep up with architectural shifts moving faster than any enterprise change management process was built to handle.

He’s right that the volume of work this represents will exceed anything we currently imagine. But what he’s pointing at underneath the list is something older and harder than any individual line item.

The processes agents most need to learn are the ones nobody wrote down. They live as workarounds that became standard practice, as institutional habits that formed around a tool that no longer exists, as the thing a new employee figures out after six months of making the wrong kind of mistakes and getting quietly corrected by someone who absorbed the lesson the same way. That knowledge doesn’t live in your data systems. It doesn’t live in your documentation. It lives in the friction, in the small resistances and redirects that encode what the organization learned, often painfully, about how work actually moves through it.

Agents skip the friction. That’s the product. That’s what makes them fast. And it’s also, if you’re not careful, what makes them corrosive in ways that don’t surface until something breaks that you didn’t know needed protecting.

The uncomfortable implication is that agents won’t just remove inefficiency. They are designed to remove the parts of the system that look like inefficiency but are actually safeguards. The second reviewer who slows things down. The extra approval step that catches edge cases once a quarter. The quiet correction that never made it into any documentation because the person giving it didn’t think it needed to be written down. These are not artifacts of bad organizational design. They are the organizational immune system, and they are invisible right up until the moment they’re gone.

I want to be careful here, because there’s a harder version of this argument that I’m not fully delving into in this piece, but I want to at least give it the weight it deserves. Replacing a slow human reviewer with a continuous evaluation loop doesn’t automatically preserve what made the human review valuable. A loop can be fast and still be shallow. It catches what it was designed to catch, and only that. The human reviewer who slows things down is also sometimes the one who notices the thing nobody thought to measure. That asymmetry matters, and the organizations that treat instrumentation as a straight substitute for judgment are going to find that out the hard way.

So here is where most people land, and where I landed too for a while: if agents are genuinely becoming coworkers, then what we need to build is the organizational equivalent of HR for them. The infrastructure that answers questions like what does this agent know, where did it learn it, who can see what it’s doing, how does it escalate when it’s uncertain, how does the organization learn from what it does.

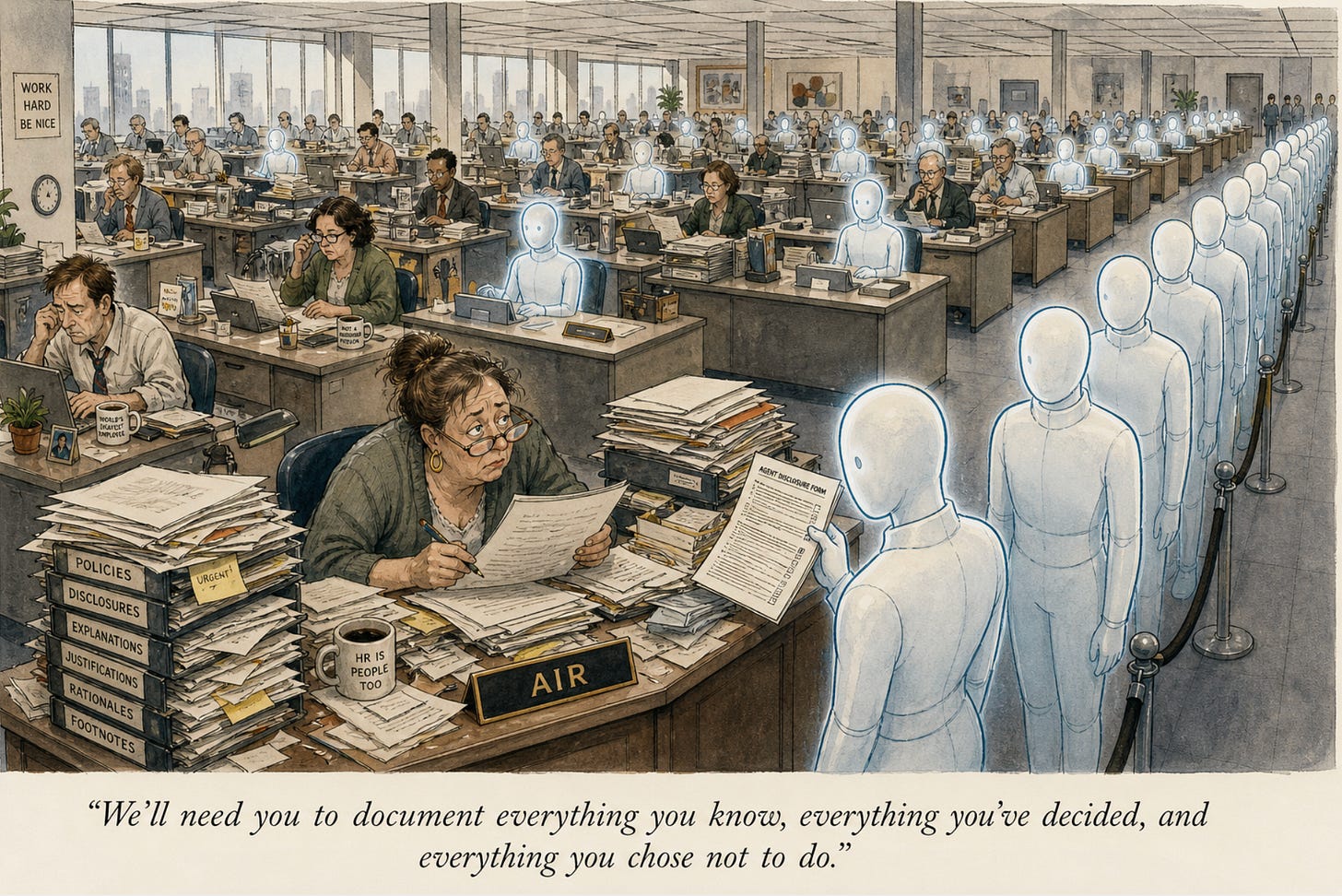

We’re accidentally rebuilding HR. That’s the realization. Organizations deploying agents at scale are reconstructing, from scratch and mostly without noticing, the entire apparatus that humans built over decades to integrate new workers into functioning institutions.

But then you sit with it for a second and the framing starts to crack.

HR stands for Human Resources. And the H is deeply significant. The entire apparatus, from onboarding to performance management to offboarding, was built around the specific nature of human workers. Human memory, human motivation, human legal standing, human error modes, the assumption underneath all of it that the worker you’re integrating has a particular kind of inner life and a particular kind of relationship to accountability.

Agents have none of that. Which means we are not rebuilding HR. We are building something that has the same shape as HR, serves the same organizational function as HR, and will eventually be as invisible and essential as HR, but is not HR because the worker it serves is not human.

What we are building is AIR. Artificial Intelligence Resources.

The metaphor is doing more work than it might look like at first. Air is not a tool. Air is the infrastructure that living things need not to perform a task but simply to exist and keep existing. You don’t think about it until something needs it and doesn’t have it. An organization deploying agents without AIR is not deploying agents badly, it’s deploying agents into an environment that wasn’t built to sustain them, and eventually something will fail in a way that traces back not to what the agent couldn’t do but to what the organization never built around it.

In practice AIR won’t look like HR software with a new label. It will look like continuous evaluation loops instead of periodic reviews, permission graphs that reflect actual workflow rather than static org chart roles, and context trails that make every consequential decision auditable after the fact. Less policy, more instrumentation. The goal is not to constrain agents but to make them legible, to the organization and to each other, in ways that let trust compound rather than erode.
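To make that a little more concrete, here is a minimal sketch of what a permission graph with a context trail might look like. Everything in it is an assumption made for illustration: the class names, the idea of keying permissions on a workflow step, and the denial rate as a stand-in for a continuous evaluation signal are all hypothetical, not a description of any existing product or standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class TrailEntry:
    """One record in the context trail (hypothetical shape)."""
    agent: str
    step: str
    resource: str
    allowed: bool
    reason: str
    at: str


@dataclass
class PermissionGraph:
    """Maps (agent, workflow step) to the resources that step may touch.

    Permissions hang off workflow steps rather than static roles, and every
    check is recorded, whether it was allowed or denied.
    """
    edges: dict[tuple[str, str], set[str]] = field(default_factory=dict)
    trail: list[TrailEntry] = field(default_factory=list)

    def grant(self, agent: str, step: str, resource: str) -> None:
        self.edges.setdefault((agent, step), set()).add(resource)

    def check(self, agent: str, step: str, resource: str, reason: str) -> bool:
        allowed = resource in self.edges.get((agent, step), set())
        # Log the decision and the agent's stated reason so it can be audited later.
        self.trail.append(
            TrailEntry(agent, step, resource, allowed, reason,
                       datetime.now(timezone.utc).isoformat())
        )
        return allowed

    def denial_rate(self, agent: str) -> float:
        """A crude continuous signal: share of this agent's checks that were denied."""
        entries = [e for e in self.trail if e.agent == agent]
        if not entries:
            return 0.0
        return sum(not e.allowed for e in entries) / len(entries)


graph = PermissionGraph()
graph.grant("invoice-agent", "reconcile", "ledger")
graph.check("invoice-agent", "reconcile", "ledger", "monthly close")
graph.check("invoice-agent", "reconcile", "payroll", "looked related")
```

The second check is denied, but it still lands in the trail along with the reason the agent gave. The point of the sketch is that the denied attempt is recorded at all, so someone can later ask why the agent reached for payroll.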

Here is the part that rarely gets said in San Francisco: the organizations best positioned to build this aren’t necessarily the ones at MCP Night. They’re the banks and insurance companies and regulated industries that never had the luxury of moving fast and breaking things. They’ve spent forty years making their processes legible, not for agents, but for auditors, regulators, and the next crisis they knew was coming eventually. They call it governance. They call it compliance. They built documentation as safeguard long before anyone was thinking about AI readiness. The infrastructure they need to extend for agents is closer to finished than anything a startup is building from scratch. The lagging edge, it turns out, has been quietly practicing.

MCP Night, in this light, is a community constructing its own version of air in public. Shared protocols, shared architectural norms, shared vocabulary for what it means to give an agent coherent and governable access to a system. The poster on the lamppost outside Philz is, in its way, a recruiting event for a new kind of worker that the recruiting infrastructure doesn’t yet have a name for. It just isn’t the only place where that work is happening.

We spent the last two years asking what AI can do. The next two are going to be spent asking what organizations need to become in order for AI to do it well, safely, and in a way that compounds rather than quietly erodes what the organization already knows.

Most organizations are not ready for that question. The gap between what agents require and what enterprises can actually provide is where most of the real damage will happen, quietly, before anyone names it. That’s not pessimism. It’s just the honest version of why this work matters.

The next generation of enterprise builders won’t be the ones who ship better agents. They will be the ones who understand how to make organizations legible enough for agents to operate inside them without breaking them.

That’s what building the air means.

Though what we build it from, and which parts of the organization we choose not to make legible, turns out to matter just as much. That’s a longer conversation.